Connecting the SBB to Claude via MCP

By Tom Norton

March 27th, 2026

Update (April 2026): Since publishing this, I've renamed the project from swiss-transport-mcp to swiss-public-transport-mcp to avoid a clash with an existing server of the same name that wraps the opentransportdata.swiss APIs. Mine is the one built on transport.opendata.ch: different data source, different scope, but the original name was already taken. References below have been updated accordingly.

If you've been anywhere near the AI developer space recently, you've probably heard of MCP. The Model Context Protocol is Anthropic's open standard for giving LLMs access to external tools and data. Think of it as USB-C for AI: a universal way to plug capabilities into models like Claude.

I've been living in Switzerland, and the Swiss public transport system is incredible. Trains run on time (mostly), trams connect everything, and boats cruise across lakes on a schedule. There's a free, open API that exposes all of it at transport.opendata.ch. So naturally, I thought: what if Claude could just... use it?

That's how swiss-public-transport-mcp was born.

What It Actually Does

The server exposes four tools to Claude:

search_locations finds stations by name or GPS coordinates

plan_journey gets connections from A to B, with real-time delays, platforms, and occupancy

get_stationboard shows live departure/arrival boards for any station

get_booking_link generates a deep link straight to sbb.ch to buy tickets

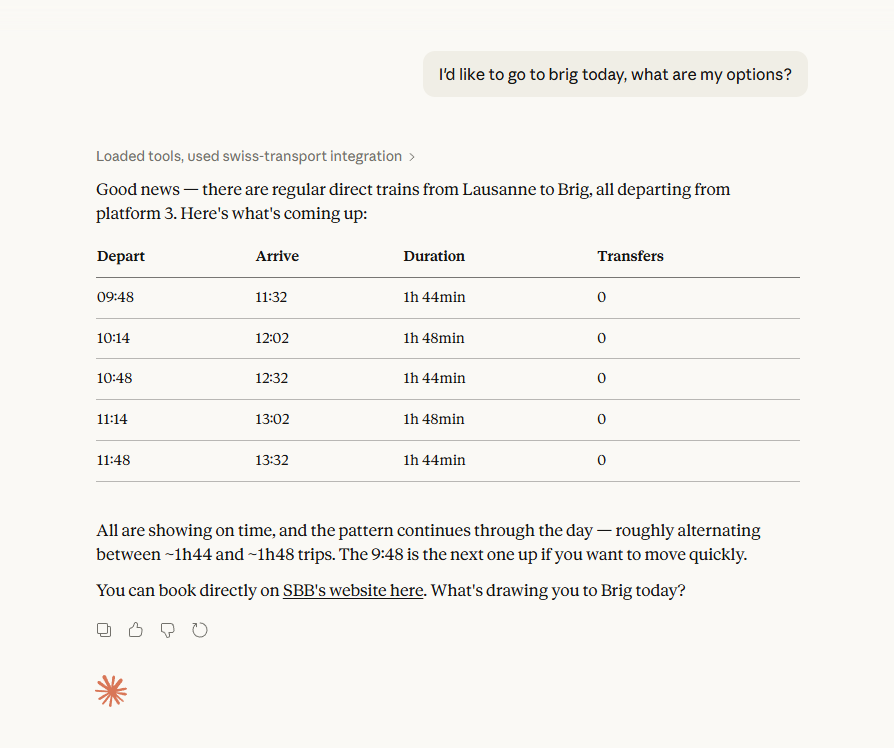

You can ask Claude "How do I get from Lausanne to Brig this morning?" and it'll give you actual, real-time train options, then hand you a link to book.

Here's what that looks like in practice:

Why Not Just Use the SBB App?

Fair question. The answer is context. When you're mid-conversation with Claude, maybe planning a trip or working out a multi-city itinerary, switching to a separate app breaks the flow. MCP lets the transport data live inside the conversation. Claude can reason about connections, compare options, and factor in things you've already told it. It's the difference between looking something up yourself and having a knowledgeable friend who just knows.

The Architecture

I wanted this to be more than a weekend hack. The codebase follows a clean layered architecture:

Tools (FastMCP) → Service → Client → Models → Formatters

Models are plain Python dataclasses. No heavy validation framework where it's not needed. Pydantic only shows up at the tool boundary, where it validates the inputs Claude sends.
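To make the "plain dataclasses" idea concrete, here is a minimal sketch of what such models could look like. The class names, field names, and sample values (`Stop`, `Connection`, `products`, the Lausanne to Brig times) are assumptions for illustration, not the project's actual definitions.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Optional


@dataclass(frozen=True)
class Stop:
    """One end of a journey (hypothetical model, not the project's own)."""

    station: str
    scheduled: datetime
    predicted: Optional[datetime] = None  # real-time estimate, if the API sent one
    platform: Optional[str] = None


@dataclass(frozen=True)
class Connection:
    """A journey from A to B (hypothetical model)."""

    origin: Stop
    destination: Stop
    transfers: int = 0
    products: list[str] = field(default_factory=list)  # line names, e.g. ["IR 90"]


# Made-up sample data, only to show how the models are constructed.
conn = Connection(
    origin=Stop("Lausanne", datetime(2026, 3, 27, 8, 20), platform="7"),
    destination=Stop("Brig", datetime(2026, 3, 27, 9, 38)),
    products=["IR 90"],
)
```

Because these are ordinary dataclasses, there is no validation cost when the client builds them from API responses; in this sketch, `frozen=True` simply guards against accidental mutation further down the pipeline.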

The API client wraps transport.opendata.ch with retry logic using exponential backoff and jitter for rate limits and server errors. It also handles some fun API quirks, like the coordinates being reversed (x=latitude, y=longitude) and durations coming back in formats like "00d01:18:00".
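The quirks and the retry timing mentioned above can be sketched in a few small helpers. This is an illustration under assumptions: the function names are hypothetical, the base and cap values are arbitrary, and the jitter shown is the "full jitter" variant, which may not be the one the project uses.

```python
import random
import re
from datetime import timedelta

# Matches duration strings such as "00d01:18:00": days, then HH:MM:SS.
_DURATION_RE = re.compile(r"^(\d+)d(\d{2}):(\d{2}):(\d{2})$")


def parse_duration(raw: str) -> timedelta:
    """Convert a string like "00d01:18:00" into a timedelta."""
    match = _DURATION_RE.match(raw)
    if match is None:
        raise ValueError(f"unexpected duration format: {raw!r}")
    days, hours, minutes, seconds = (int(part) for part in match.groups())
    return timedelta(days=days, hours=hours, minutes=minutes, seconds=seconds)


def to_lat_lon(coordinate: dict) -> tuple[float, float]:
    """Return (latitude, longitude) from a payload where x is latitude and y is longitude."""
    return float(coordinate["x"]), float(coordinate["y"])


def backoff_delay(attempt: int, base: float = 0.5, cap: float = 8.0) -> float:
    """Seconds to wait before retry number `attempt` (0-based).

    The ceiling doubles with each attempt up to `cap`; the actual delay is drawn
    uniformly from [0, ceiling] so that many clients do not retry in lockstep.
    """
    ceiling = min(cap, base * (2 ** attempt))
    return random.uniform(0.0, ceiling)
```

A retry loop would then sleep for `backoff_delay(attempt)` after each rate-limit or server-error response and give up after a fixed number of attempts.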

Formatters turn raw data into human-readable text rather than JSON. This is deliberate. LLMs process structured text more naturally than nested JSON, and it makes the output immediately useful in conversation. Delays get a ⚠ symbol, walking segments show 🚶, and occupancy displays as ●●○ so you know which carriages to avoid.
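As a rough sketch of that formatting style, the helper below renders one departure as a single line of text. The function names, the line layout, and the assumption that occupancy arrives as an integer on a 1 to 3 scale are illustrative, not taken from the project.

```python
from typing import Optional


def occupancy_dots(level: Optional[int], scale: int = 3) -> str:
    """Render an occupancy level as filled and empty dots, e.g. 2 of 3 -> "●●○"."""
    if level is None:
        return ""
    level = max(0, min(level, scale))  # clamp out-of-range values
    return "●" * level + "○" * (scale - level)


def format_departure(
    when: str,
    line: str,
    destination: str,
    delay_minutes: int = 0,
    occupancy: Optional[int] = None,
) -> str:
    """Build one human-readable departure line, adding delay and occupancy if present."""
    parts = [f"{when}  {line} to {destination}"]
    if delay_minutes > 0:
        parts.append(f"⚠ +{delay_minutes} min")
    dots = occupancy_dots(occupancy)
    if dots:
        parts.append(dots)
    return "  ".join(parts)


print(format_departure("08:20", "IR 90", "Brig", delay_minutes=4, occupancy=2))
# prints: 08:20  IR 90 to Brig  ⚠ +4 min  ●●○
```

On-time departures with no occupancy data collapse to just the time, line, and destination, which keeps a long stationboard easy to scan.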

The Fun Bits

A few things I particularly enjoyed building:

Deep linking to SBB. Generating booking URLs sounds simple until you realise SBB's URL format uses underscores instead of colons for times and has its own parameter conventions. Getting this right means Claude can end a journey plan with a link to actually book it, which feels genuinely useful.

Station ambiguity handling. Ask for "Basel" and the API returns multiple stations (Basel SBB, Basel Badischer Bahnhof, Basel SNCF...). Rather than failing, the service returns a clarification prompt listing the options so Claude can ask you which one you meant.

Real-time awareness. The server doesn't just give you the timetable. It shows predicted times vs. scheduled, computes delay minutes, flags cancellations, and reports per-class occupancy levels. It's the kind of information that makes the difference between catching your connection and watching it leave.
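Two of these ideas are easy to sketch in isolation: returning a clarification instead of failing on an ambiguous station name, and computing delay minutes from scheduled and predicted times. Everything named here (`Station`, `Clarification`, `resolve_station`, `delay_minutes`, the placeholder ids, and the timestamp format with a `+0100` style offset) is an assumption for illustration rather than the project's actual code.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional, Union

# Assumed timestamp shape: "2026-03-27T08:20:00+0100".
_TS_FORMAT = "%Y-%m-%dT%H:%M:%S%z"


@dataclass(frozen=True)
class Station:
    id: str
    name: str


@dataclass(frozen=True)
class Clarification:
    """Text the model can relay to the user when a name is ambiguous."""

    message: str


def resolve_station(query: str, candidates: list[Station]) -> Union[Station, Clarification]:
    """Pick a single station, or explain why the caller needs to ask the user."""
    if not candidates:
        return Clarification(f'No station found for "{query}".')
    exact = [s for s in candidates if s.name.casefold() == query.casefold()]
    if len(exact) == 1:
        return exact[0]
    if len(candidates) == 1:
        return candidates[0]
    options = "\n".join(f"- {s.name}" for s in candidates)
    return Clarification(f'"{query}" matches several stations. Which one did you mean?\n{options}')


def delay_minutes(scheduled: str, predicted: Optional[str]) -> int:
    """Whole minutes the predicted time is behind schedule (0 if early or unknown)."""
    if not predicted:
        return 0
    planned = datetime.strptime(scheduled, _TS_FORMAT)
    expected = datetime.strptime(predicted, _TS_FORMAT)
    seconds_late = (expected - planned).total_seconds()
    return max(0, int(seconds_late // 60))


# Placeholder ids; the station names are the ones mentioned above.
basel = [
    Station("a", "Basel SBB"),
    Station("b", "Basel Badischer Bahnhof"),
    Station("c", "Basel SNCF"),
]
result = resolve_station("Basel", basel)
```

Here `result` is a `Clarification` listing all three Basel stations, whereas `resolve_station("Basel SBB", basel)` returns the matching `Station` directly. Returning a value instead of raising lets the tool hand the question back to Claude as ordinary text.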

Getting Started

If you want to try it yourself, clone the repo and add it to your Claude config:

    {
      "mcpServers": {
        "swiss-public-transport": {
          "command": "uv",
          "args": [
            "run",
            "--directory",
            "/path/to/swiss-public-transport-mcp",
            "swiss-public-transport-mcp"
          ]
        }
      }
    }

The whole thing is open source and MIT licensed.

What I Learned

Building an MCP server is surprisingly satisfying. The protocol is simple enough that you can get something working fast, but there's real depth in making the output good. Raw API data is rarely what an LLM, or a human, actually wants to read. The formatting layer ended up being where most of the thought went: deciding what to show, what to hide, and how to make a timetable scannable in a chat window.

If you've got a favourite API that you wish Claude could access, I'd genuinely encourage you to build an MCP server for it. The barrier to entry is low, and the result feels a bit like magic: your AI assistant suddenly knows things it couldn't know before.